Why Apple Is Taking a Different Path in the AI Race—and How Rivals Are Reacting

Apple’s approach to artificial intelligence stands in stark contrast to the cloud-centric strategies of competitors like Google, OpenAI, and Microsoft. By prioritizing on-device processing, strong privacy guarantees, and custom Apple silicon (A-series chips in iPhones, M-series in iPads and Macs), Apple is optimizing for consumer trust and everyday utility rather than raw cloud scale.

As of early 2026, this strategy, crystallized in Apple Intelligence, has pushed rivals to respond with partnerships, feature-parity pushes, and new hardware.

Apple’s Core Pillars: On-Device, Privacy, Silicon

At the heart of Apple’s divergence is on-device AI. Unlike cloud-reliant systems that send user data to distant servers, Apple runs lightweight foundation models directly on iPhones, iPads, and Macs. This keeps latency low (often sub-second) for tasks like text summarization and notification prioritization, runs image generation locally, and lets many features work without an internet connection, which is crucial for a seamless user experience.

This ties into Apple’s privacy-first positioning. Apple Intelligence processes sensitive data locally on the Neural Engine wherever possible. Requests that need a larger model go to Private Cloud Compute (PCC), Apple-silicon servers that Apple says use the data only to fulfill the request, do not retain it, and are designed so that even Apple cannot access it. That pitch speaks directly to the privacy concerns that have eroded trust in Big Tech AI since ChatGPT’s rise.
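To make the local-first split concrete, here is a minimal sketch of a routing policy between an on-device model and a cloud tier. Apple has not published the exact logic Apple Intelligence uses to decide when to escalate to Private Cloud Compute, so the `route` function, the `Request` fields, and the 4,096-token budget below are hypothetical stand-ins chosen for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Target(Enum):
    ON_DEVICE = "on-device model"
    PRIVATE_CLOUD = "Private Cloud Compute"


@dataclass
class Request:
    prompt_tokens: int               # size of prompt plus context (hypothetical field)
    needs_large_model: bool = False  # e.g. broad world knowledge or long reasoning


# Hypothetical capacity of the local model; an assumption, not an Apple-published rule.
ON_DEVICE_TOKEN_BUDGET = 4096


def route(req: Request, online: bool) -> Target:
    """Local-first policy: escalate to the cloud tier only when it is needed and reachable."""
    fits_locally = (
        req.prompt_tokens <= ON_DEVICE_TOKEN_BUDGET and not req.needs_large_model
    )
    if fits_locally or not online:
        # Offline requests stay local, accepting a possibly weaker answer over no answer.
        return Target.ON_DEVICE
    return Target.PRIVATE_CLOUD


if __name__ == "__main__":
    print(route(Request(prompt_tokens=300), online=True).value)
    print(route(Request(prompt_tokens=12_000), online=True).value)
    print(route(Request(prompt_tokens=12_000), online=False).value)
```

The design choice worth noting is the default: data leaves the device only when the request exceeds local capacity and a network is available, which mirrors the privacy-first posture rather than a "cloud unless proven otherwise" posture.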

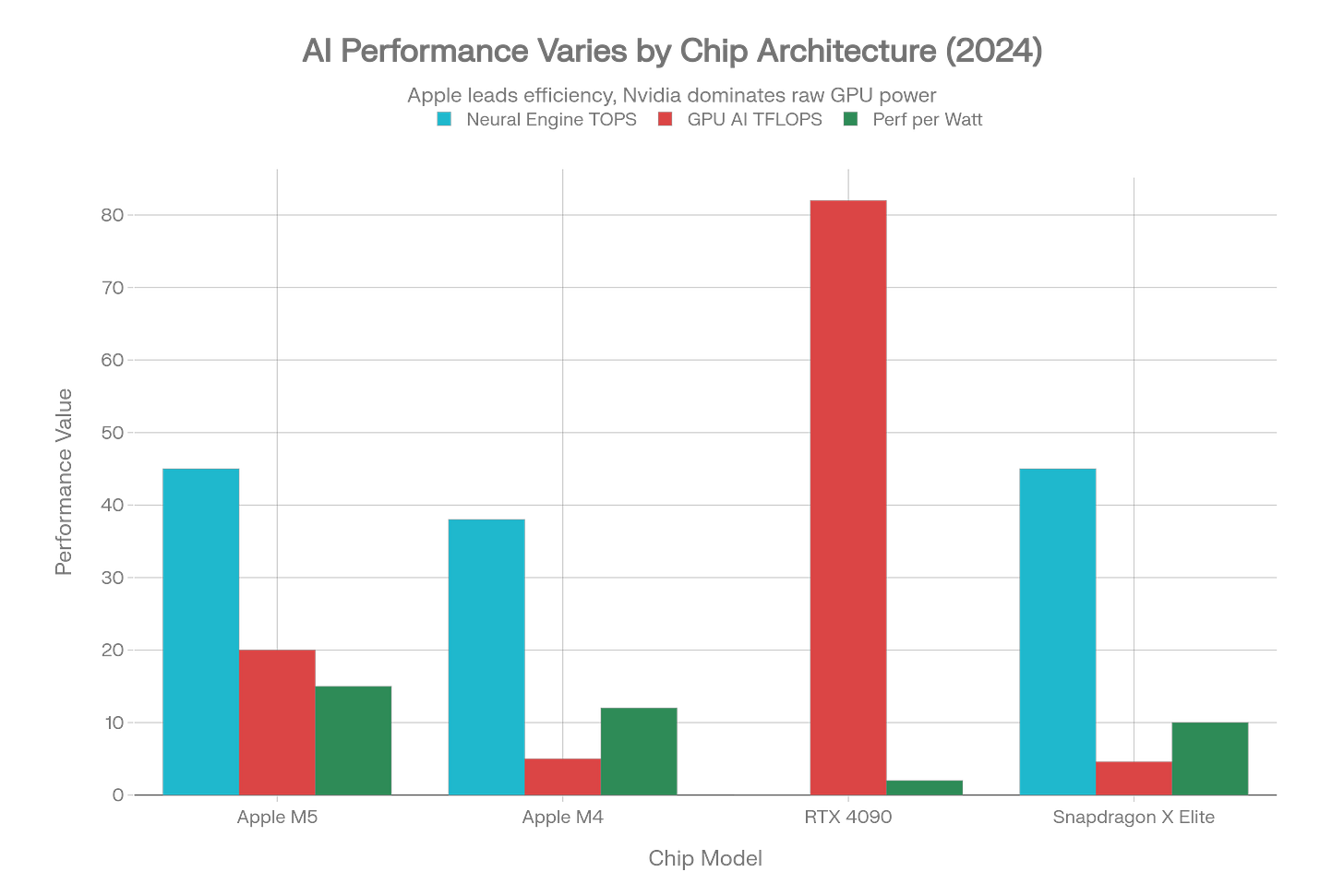

Powering it all is Apple’s silicon advantage. The unified memory architecture and dedicated AI accelerators in chips like the M4 and the M5 (launched in October 2025) deliver large efficiency gains. The M5 keeps a 16-core Neural Engine, reportedly rated at around 45 TOPS (trillion operations per second), and Apple cites over 4x the peak GPU compute for AI of the M4 and nearly 30% more unified memory bandwidth, all while drawing little enough power for all-day battery life.

This chart illustrates Apple’s edge: the M5 and M4 excel in Neural Engine TOPS and performance per watt, outpacing power-hungry Nvidia GPUs on efficiency for mobile AI workloads, though not on raw throughput.
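The M5’s memory-bandwidth gain cited above matters because generating tokens with a local LLM is usually limited by how fast weights stream from memory, not by TOPS. A rough ceiling is tokens per second ≈ bandwidth ÷ model size in bytes. The sketch assumes Apple’s published base-chip bandwidth figures (120 GB/s for M4, 153 GB/s for M5) and a hypothetical 3-billion-parameter model stored at 4 bits per weight; real throughput is lower because of KV-cache reads and compute overhead.

```python
# Assumed peak unified-memory bandwidth for the base chips, in GB/s (Apple's published figures).
M4_BANDWIDTH_GBPS = 120.0
M5_BANDWIDTH_GBPS = 153.0


def relative_gain(old: float, new: float) -> float:
    """Fractional improvement of `new` over `old`."""
    return (new - old) / old


def model_size_gb(params_billion: float, bits_per_weight: float) -> float:
    """Weight footprint in GB: billions of params * bits per weight / 8 bits per byte."""
    return params_billion * bits_per_weight / 8


def decode_ceiling_tokens_per_s(bandwidth_gbps: float, size_gb: float) -> float:
    """Memory-bound upper limit: each generated token reads every weight once."""
    return bandwidth_gbps / size_gb


if __name__ == "__main__":
    gain = relative_gain(M4_BANDWIDTH_GBPS, M5_BANDWIDTH_GBPS)
    size = model_size_gb(params_billion=3, bits_per_weight=4)  # hypothetical model
    print(f"M5 bandwidth gain over M4: {gain:.1%}")
    print(f"M4 ceiling: {decode_ceiling_tokens_per_s(M4_BANDWIDTH_GBPS, size):.0f} tokens/s")
    print(f"M5 ceiling: {decode_ceiling_tokens_per_s(M5_BANDWIDTH_GBPS, size):.0f} tokens/s")
```

Under these assumptions the gain is 27.5% (hence "nearly 30%"), and the decoding ceiling rises from about 80 to about 102 tokens per second, which is why bandwidth, not just TOPS, shapes on-device LLM responsiveness.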

These pillars aren’t just technical; they’re a moat. Apple’s ecosystem of more than 2 billion active devices creates a flywheel in which AI makes the hardware stickier, boosting services revenue without relying on selling user data.

Recent Momentum and 2026 Roadmap

Apple Intelligence hit prime time in late 2025, after Apple opened it to all developers through the Foundation Models framework, announced at WWDC in June and shipped with iOS 26 in September. Third-party apps can tap the on-device model for free, even offline, which has sparked a wave of new AI features across the App Store.

Alongside the M5 launch, attention turned to an enhanced Siri with deeper conversational ability, multitasking, and multimodal input, slated to roll out in spring 2026. Leadership hires such as Amar Subramanya underscore Apple’s bets on custom foundation models and safety guardrails.

Analysts expect the strategy to pay off in 2026. In their view, Apple’s “infrastructure-first” bet turns cloud LLMs into interchangeable commodities and positions Apple for dominance in personal AI. C-level trust in AI reportedly jumped 54% after launch, which supporters read as validation of the privacy play amid regulatory scrutiny.

Yet challenges linger: delayed features have fed perceptions that Apple is lagging, and the new Siri must prove less prone to hallucination than its rivals.

Competitor Responses: Partnership, Parity, Pivot

Rivals aren’t standing still. Google’s dual-track response exemplifies the scramble.

Google’s Hybrid Counterplay

In January 2026, Google unveiled “Personal Intelligence” in its Gemini app, a direct answer to Apple Intelligence that offers contextual task help, image generation, and proactive suggestions, starting with U.S. subscribers. It challenges Apple head-on in consumer AI by leveraging Gemini’s multimodal strengths.

Yet collaboration trumps pure rivalry. Under a multi-year deal announced January 11, Gemini is being integrated into Apple Foundation Models and Siri to handle complex queries, with requests still routed through Private Cloud Compute. Reports describe it as a multi-billion-dollar arrangement, and some coverage framed it as Apple “ditching” its earlier OpenAI ties in favor of Google’s scale.

The deal lets Google tap Apple’s ecosystem for cloud revenue while it accelerates its Gemini Nano on-device models for Android. On productivity, Gemini’s deep Workspace integrations help narrow the gap with Apple’s tightly integrated apps.

OpenAI’s Hardware Gambit

OpenAI’s story is pivot-heavy. Its 2024 partnership embedded ChatGPT as an opt-in Siri sidekick: requests are user-initiated, and Apple says OpenAI does not store them. But by 2026 Apple had shifted to Google, prompting aggressive moves from OpenAI.

The $6.4B io acquisition and Jony Ive partnership aim at AI-native hardware challenging iPhones—think screenless, voice-first devices with edge-optimized models. OpenAI eyes smaller, deployable LLMs to mimic Apple’s on-device wins, though its cloud DNA creates friction.

Sam Altman has hinted at “device intelligence” revolutions, validating Apple’s thesis while racing to diversify beyond Azure dependency.

Microsoft’s Cross-Platform Push

Microsoft is supercharging Copilot as a ubiquitous AI layer across Windows, Office, and mobile. Unlike Apple’s walled garden, Copilot emphasizes app-agnostic delivery, positioning Microsoft for enterprise wins where privacy regulations favor hybrid deployments.

Copilot+ PCs, which require an NPU rated at 40+ TOPS, chase M-series efficiency, but Apple’s depth of hardware-software integration remains unmatched.

Implications for Tech Players

Apple Intelligence isn’t abstract—it’s reshaping careers and strategies for tech’s core roles. Drawing from enterprise AI shifts, here’s how leaders, builders, and orchestrators must adapt.

For Founders: Moats in Privacy and Ecosystems

Founders face a golden window but razor-sharp risks. Apple’s Foundation Models framework gives free access to an on-device LLM, enabling privacy-centric apps such as health trackers that analyze biometrics locally or enterprise note-takers that meet compliance needs without any cloud processing. These apps can monetize through the App Store (after Apple’s commission of up to 30%) and through AI-driven retention.

Build moats around “Apple-adjacent” niches: self-healing productivity agents or multimodal creative tools optimized for the M5. US startups, such as those in the Snowflake ecosystem, have reported cost savings of about 35% from aligning with on-device trends, according to talks at 2026 summits. A possible pivot roadmap: audit Neural Engine compatibility in Q1, then run pilots with Private Cloud Compute opt-ins in Q2.

Challenges? Ecosystem lock-in squeezes Android and web plays. Founders must hybridize, using Gemini for cross-platform reach, while avoiding commoditization. Success looks like an Anthropic-style safety niche elevated by Apple’s standards; failure looks like irrelevance for lack of edge efficiency. Founders in the Philippines eyeing remote US roles can gain an edge with SEO-optimized AI tools that power content flywheels.

For Engineers: Skill Shifts to Edge Mastery

Engineers, your stack is evolving fast. Apple’s M-series chips demand quantization, unified-memory tuning, and Neural Engine-friendly operations, and benchmarks show the M5 outpacing Nvidia GPUs on performance per watt for inference. Prototype self-healing pipelines that monitor drift, predict failures, and remediate via Monte Carlo patterns; proponents say this can cut firefighting by as much as 90%, freeing time for AI work.
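Quantization is the first of those skills. The sketch below shows symmetric per-tensor int8 quantization in plain Python: each weight is stored as a small integer plus one shared scale factor, cutting storage from 4 bytes (float32) to 1 byte per weight at the cost of a bounded rounding error. It illustrates the idea only; real deployments on Apple hardware would rely on Core ML tooling, and the function names here are hypothetical.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor int8 quantization: q = round(w / scale), scale = max|w| / 127."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Clamp guards against floating-point error pushing a value just past the int8 range.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize_int8(q: list[int], scale: float) -> list[float]:
    """Map integers back to approximate float weights."""
    return [v * scale for v in q]


if __name__ == "__main__":
    weights = [0.42, -1.27, 0.003, 0.88, -0.5]
    q, scale = quantize_int8(weights)
    restored = dequantize_int8(q, scale)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print("int8 values:", q)
    print(f"scale={scale:.4f}, worst-case error={worst:.4f} (bound: scale/2 = {scale / 2:.4f})")
```

Lower-bit formats (4-bit and below) push the same trade-off further: because local token generation is largely limited by memory bandwidth, smaller weights also mean faster generation, but holding accuracy then takes extra care such as per-channel scales and calibration data.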

Key upskilling: Core ML rather than raw PyTorch for deployment (train in PyTorch, convert with coremltools), and multimodal fusion (vision plus language) for Siri-like apps. Walmart-scale US deployments suggest AI-readiness audits can yield 90% faster analytics, and engineers who own data lineage from ETL through to agents thrive.

Headwinds: a flood of GPU-focused talent competes with Apple-silicon specialists. Remote engineers in the Philippines can target M-series credentials for Australian and New Zealand visa pathways, leveraging cloud-infrastructure expertise for hybrid roles. Roadmap: adopt lineage tools in Q1; govern multimodal models at scale by Q3.

For Product Managers: Lifecycle Ownership in AI

PMs, reprioritize ruthlessly. Apple’s path makes privacy SLAs the first priority, including offline viability and temporal drift checks, and turns roadmaps into “lifecycle ownership”: tracking each capability from feature to production agent. Balance walled-garden magic (e.g., Siri extensions) with cross-platform reach via Gemini or Copilot APIs.
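A temporal drift check can be as simple as comparing this week’s distribution of an input feature or model score against a baseline window. The sketch uses the Population Stability Index (PSI), one common metric chosen here for illustration: PSI is the sum over bins of (a − e) · ln(a / e), where e and a are each bin’s share of the baseline and current windows. The bin counts are made up, and the 0.1 and 0.25 thresholds are conventional rules of thumb, not Apple guidance.

```python
import math


def psi(expected_counts: list[int], actual_counts: list[int], eps: float = 1e-6) -> float:
    """Population Stability Index: sum over bins of (a - e) * ln(a / e), using bin proportions."""
    if len(expected_counts) != len(actual_counts):
        raise ValueError("both windows must use the same bins")
    e_total, a_total = sum(expected_counts), sum(actual_counts)
    score = 0.0
    for e, a in zip(expected_counts, actual_counts):
        # eps avoids log(0) and division by zero when a bin is empty.
        e_pct = max(e / e_total, eps)
        a_pct = max(a / a_total, eps)
        score += (a_pct - e_pct) * math.log(a_pct / e_pct)
    return score


def drift_status(score: float, warn: float = 0.1, alert: float = 0.25) -> str:
    """Map a PSI score to an action using rule-of-thumb thresholds."""
    if score >= alert:
        return "alert"
    if score >= warn:
        return "warn"
    return "stable"


if __name__ == "__main__":
    baseline = [120, 340, 310, 180, 50]   # hypothetical binned scores, launch week
    this_week = [60, 250, 330, 260, 100]  # hypothetical binned scores, current week
    score = psi(baseline, this_week)
    print(f"PSI={score:.3f} -> {drift_status(score)}")
```

With these made-up counts the score lands around 0.13, a "warn": enough shift to review before it erodes the offline experience, but not enough to block a release.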

Action items: run AI-readiness audits in Q1 and agent pilots synced to Apple rollouts in Q2. US companies such as Bridgestone have reportedly cut costs by 35% through mature infrastructure, and PMs who drive that work compound the ROI. In the Philippine tech scene, PMs targeting remote US roles should prepare for behavioral interviews on AI ethics.

This triad (founders find niches, engineers master the edge, PMs govern) mirrors earlier data-engineering revolutions: mature infrastructure unlocks agentic futures.

The Road Ahead: Efficiency Trumps Scale?

Apple’s bet: AI succeeds where it’s invisible, reliable, and private, not in flashy demos. On-device dominance could redefine the race, making the cloud a feature rather than the foundation. Rivals’ responses, from Google’s entente to OpenAI’s hardware moonshot, acknowledge this.

Critics decry Apple’s caution as missed cloud revenue, but Q1 2026 earnings suggest otherwise: AI-fueled services growth alongside stable hardware sales. As M5 MacBooks ship alongside new iPhones, expect ecosystem lock-in to tighten, forcing Android and PC makers to match Apple’s silicon strides.

In a post-privacy-scandal world, Apple’s path may prove prescient. Tech players must adapt: build for the edge, embed privacy, and partner modularly. The AI race won’t be won by the biggest model but by the best integration, for founders scaling moats, engineers mastering silicon, and PMs owning lifecycles.

PARTNER WITH US

Tech Scoop lands in the inboxes of 50,000+ tech leaders and engineers — the kind who build, ship, and buy.

No fluff. No noise. Just high-impact visibility in one of tech’s sharpest weekly reads.

👉 Interested? Fill out this quick form to start the conversation.

You can also check out our sponsorship page here. For other collaborations, feel free to send me a message.