Human-Centered Explainability Is the Next Frontier of AI Security Interfaces

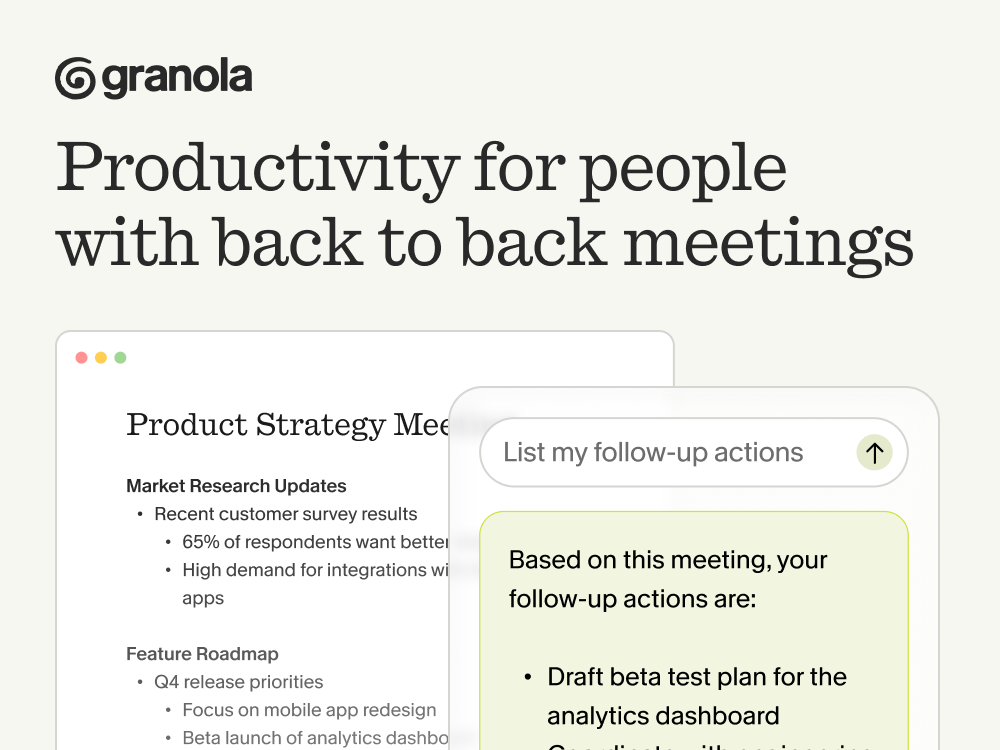

Enterprise cybersecurity is entering a new phase, one where AI copilots are no longer experimental features but core operational tooling.

Platforms like Microsoft Security Copilot, along with the AI capabilities Palo Alto Networks is expanding across its security platforms, are rapidly automating tasks that historically consumed analysts’ time: alert triage, threat correlation, investigation summarization, and even remediation guidance. For Security Operations Centers (SOCs), this is a major shift. AI promises to collapse investigation time, reduce alert fatigue, and surface attack patterns that humans might miss.

But as many SOC teams are starting to realize, deploying AI in security workflows introduces a new challenge that isn’t purely technical—it’s cognitive.

When an AI copilot recommends isolating a host, flagging a lateral movement pattern, or escalating a potential credential compromise, the analyst staring at that dashboard has to decide in seconds whether to trust the system. If the AI behaves like a black box, two failure modes immediately emerge: analysts may either over-trust the recommendation and accept it blindly, or under-trust it and ignore valuable signals.

In a high-stakes environment where minutes matter, both outcomes are dangerous.

This is precisely where human-centered explainability becomes critical. The emerging insight from recent research is that explainability in cybersecurity is less about the internals of the model and more about how the reasoning is surfaced through the interface the analyst actually uses.

Explainability Is a UI Problem

Traditional explainable AI research has largely focused on the model layer. Researchers have spent years developing techniques like feature attribution, saliency maps, attention visualization, and other ways to interpret what happens inside neural networks.

Those methods are valuable for model auditing, but they rarely map cleanly to real operational workflows.

SOC analysts are not inspecting gradients or feature contributions while triaging an alert queue. Their mental model is closer to investigative reasoning: What happened? Why does the system think it’s malicious? What would change the conclusion?

The gap between technical explainability and operational usability is where many AI systems fail to gain traction. An algorithm might technically expose its reasoning, but if the interface presents that explanation poorly—or overloads the analyst with irrelevant details—the result is still confusion.

In practice, explainability succeeds or fails at the user interface layer.

Four Explanation Strategies That Shape Analyst Decision-Making

To explore how explanation design affects security workflows, researchers prototyped four explanation strategies within a SIEM-style dashboard. Each one represents a different philosophy for communicating AI reasoning.

Confidence Visualization

The simplest approach is to expose probability estimates or risk scores. These appear as visual indicators—color gradients, confidence bars, or probability percentages—that communicate how strongly the AI believes an alert is malicious.

This approach is extremely efficient. Analysts can scan dozens of alerts quickly and prioritize the highest-confidence threats.

The drawback is that confidence alone doesn’t answer the question analysts instinctively ask: why?

A confidence score without context risks being interpreted as a black-box assertion.
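
To make the idea concrete, here is a minimal sketch of a confidence indicator, assuming the model emits a risk score between 0 and 1. The function names and the band thresholds (0.75 and 0.40) are hypothetical choices for illustration, not values from the study or from any vendor's product.

```python
def confidence_band(score: float) -> str:
    """Map a risk score in [0, 1] to a coarse display band.

    The 0.75 and 0.40 cutoffs are illustrative assumptions.
    """
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if score >= 0.75:
        return "high"
    if score >= 0.40:
        return "medium"
    return "low"


def confidence_bar(score: float, width: int = 20) -> str:
    """Render a plain-text confidence bar with the percentage and band."""
    band = confidence_band(score)  # also validates the score range
    filled = round(score * width)
    bar = "#" * filled + "-" * (width - filled)
    return f"[{bar}] {score:.0%} ({band})"


if __name__ == "__main__":
    for s in (0.78, 0.42, 0.10):
        print(confidence_bar(s))
```

Note what the output leaves out: the bar says how strongly the system believes something, and nothing about why.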

Natural Language Rationale

The second approach attempts to bridge that gap with structured natural-language explanations. These resemble the short investigative summaries many analysts already write manually.

For example:

“This alert is likely malicious due to abnormal lateral movement activity originating from a compromised workstation and matching known credential-dumping patterns.”

These explanations are typically generated through templated reasoning structures—observation, inference, and conclusion.

Natural language explanations dramatically improve readability, particularly for junior analysts or teams under time pressure. However, they introduce a subtle risk: language can sound authoritative even when the underlying reasoning is uncertain. If phrased confidently, these explanations may inadvertently encourage over-trust.
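
One way to reduce that risk is to tie the wording to the model's confidence. The sketch below assumes the observation, inference, and conclusion structure described above. The `Rationale` class, the `hedge` function, and its cutoffs are hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Rationale:
    observation: str   # what was seen in the telemetry
    inference: str     # what the system infers from it
    conclusion: str    # the resulting assessment, e.g. "malicious"
    confidence: float  # model confidence in [0, 1]


def hedge(confidence: float) -> str:
    """Pick hedging language so the text does not overstate certainty.

    The 0.9 and 0.6 cutoffs are illustrative assumptions.
    """
    if confidence >= 0.9:
        return "very likely"
    if confidence >= 0.6:
        return "likely"
    return "possibly"


def render(r: Rationale) -> str:
    """Fill the observation / inference / conclusion template."""
    return (
        f"Observed: {r.observation}. "
        f"Inference: {r.inference}. "
        f"Conclusion: this alert is {hedge(r.confidence)} {r.conclusion} "
        f"(confidence {r.confidence:.0%})."
    )


if __name__ == "__main__":
    print(render(Rationale(
        observation="abnormal lateral movement from a workstation",
        inference="the activity matches known credential-dumping patterns",
        conclusion="malicious",
        confidence=0.78,
    )))
```

Because the template always states the confidence next to the conclusion, a fluent sentence cannot quietly hide a weak score.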

Counterfactual Explanations

A more advanced approach introduces counterfactual reasoning: allowing analysts to explore how the model’s decision would change if certain inputs changed.

For instance:

“If the source IP reputation score were neutral rather than malicious, the alert risk would drop from 78% to 42%.”

Interactive controls—sliders, toggles, or expandable panels—allow users to manipulate variables and observe how the risk assessment shifts.

This technique aligns strongly with how engineers and analysts think. Instead of being handed an answer, they can test the system’s logic directly. The trade-off is cognitive load; interacting with counterfactual explanations requires more time and attention.
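
A counterfactual control can be sketched as re-scoring the alert with one input changed. The example below uses a toy logistic model. The feature names, weights, and bias are invented for illustration and will not reproduce the 78% and 42% figures quoted above. A real product would call its own scoring model instead.

```python
import math
from typing import Dict, Tuple

# Hypothetical weights for a toy logistic risk model (not from any real product).
WEIGHTS: Dict[str, float] = {
    "ip_reputation_malicious": 1.6,
    "lateral_movement": 1.2,
    "off_hours_login": 0.5,
}
BIAS = -1.5


def risk(features: Dict[str, float]) -> float:
    """Score an alert as a probability using the toy logistic model."""
    z = BIAS + sum(WEIGHTS[name] * value for name, value in features.items())
    return 1.0 / (1.0 + math.exp(-z))


def counterfactual(features: Dict[str, float], name: str, new_value: float) -> Tuple[float, float]:
    """Return (risk before, risk after) when one feature is changed."""
    changed = {**features, name: new_value}  # copy, leaving the original alert untouched
    return risk(features), risk(changed)


def describe(features: Dict[str, float], name: str, new_value: float) -> str:
    """Phrase the counterfactual the way an interface might show it."""
    before, after = counterfactual(features, name, new_value)
    return (
        f"If {name} were {new_value}, the alert risk would change "
        f"from {before:.0%} to {after:.0%}."
    )


if __name__ == "__main__":
    alert = {"ip_reputation_malicious": 1.0, "lateral_movement": 1.0, "off_hours_login": 1.0}
    print(describe(alert, "ip_reputation_malicious", 0.0))
```

A slider or toggle in the interface would simply call `counterfactual` with the new value each time the analyst moves it.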

Hybrid Explanations

The most effective design combines these approaches using progressive disclosure. Analysts first see a quick confidence signal, then optionally expand the interface to reveal natural language explanations and counterfactual exploration tools.

This layered model mirrors how investigations actually unfold: quick triage first, deeper reasoning only when necessary.
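
Progressive disclosure can be modeled as a single explanation object whose layers are revealed by depth. This is a minimal sketch. The `AlertExplanation` class, the three-level depth scheme, and the line prefixes are assumptions made for illustration, not the study's actual design.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AlertExplanation:
    confidence: float  # layer 0: always visible
    rationale: str     # layer 1: shown after the first expand
    counterfactuals: List[str] = field(default_factory=list)  # layer 2: shown after the second expand


def visible_layers(exp: AlertExplanation, depth: int) -> List[str]:
    """Return the lines to show at a given disclosure depth (0, 1, or 2)."""
    lines = [f"Risk: {exp.confidence:.0%}"]
    if depth >= 1:
        lines.append(f"Why: {exp.rationale}")
    if depth >= 2:
        lines.extend(f"What if: {c}" for c in exp.counterfactuals)
    return lines


if __name__ == "__main__":
    exp = AlertExplanation(
        confidence=0.78,
        rationale="abnormal lateral movement matching credential-dumping patterns",
        counterfactuals=["neutral source IP reputation lowers the risk"],
    )
    for depth in range(3):
        print(depth, visible_layers(exp, depth))
```

Keeping the three layers as separate fields also means each one can be rendered, logged, or hidden independently.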

What the Data Reveals About Analyst Behavior

In controlled experiments involving both experienced analysts and trained participants, explanation style significantly affected performance across several metrics: accuracy, task completion time, cognitive workload, and trust calibration.

Hybrid explanations produced the strongest results overall. Participants achieved the highest decision accuracy and the most balanced level of trust when explanations were layered rather than singular.

Counterfactual explanations also proved highly effective, particularly for complex alerts where understanding causal relationships mattered. Participants spent significantly more time interacting with these elements, suggesting deeper cognitive engagement with the AI’s reasoning.

Natural language explanations, while the fastest to process, showed signs of encouraging premature acceptance of AI recommendations. Analysts tended to accept the explanation at face value rather than challenge the reasoning.

The key insight is that explanation depth influences not only understanding but also behavior.

Engineers and Analysts Want Control, Not Just Answers

One of the most revealing patterns in the study is how analysts interacted with the system. When given the opportunity to manipulate assumptions or test hypothetical scenarios, users spent more time exploring the interface.

This behavior reflects a broader truth about technical professionals: they trust systems more when they can interrogate them.

An explanation that simply states a conclusion does not build trust. An explanation that allows users to test the reasoning does.

In other words, explainability should shift from passive explanation to interactive verification.

Design Principles for SOC Interfaces

Several design principles emerge from these findings that are particularly relevant for enterprise security platforms:

Explanations should follow progressive disclosure. Analysts operating under alert fatigue need quick signals before they need deep explanations. Interfaces should allow users to drill down only when necessary.

Different components of reasoning should remain distinct. Confidence scores answer the question of how certain the system is. Natural language explanations address why the system reached that conclusion. Counterfactual tools allow analysts to explore how the conclusion might change. Combining these prematurely can overload users.

Uncertainty should be visible. Binary classifications like “malicious” or “benign” hide the probabilistic nature of machine learning systems. Visualizing uncertainty—through probability ranges or gradients—helps analysts calibrate trust more accurately.

Systems should enable interactive validation. Analysts should be able to experiment with inputs, explore causal factors, and verify assumptions rather than simply reading a static explanation.

Explanation design should reflect user roles. Junior analysts often benefit from natural-language reasoning, while senior analysts and incident responders tend to prefer deeper investigative controls like counterfactual simulations.
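
The principle of visible uncertainty can be illustrated with a label that shows a probability range instead of a binary verdict. The sketch assumes the upstream model supplies a lower and upper bound for its estimate. How those bounds are produced is outside this example, and the function name and the 0.2 width threshold are hypothetical.

```python
def uncertainty_label(low: float, high: float) -> str:
    """Describe an alert with a probability range rather than 'malicious' or 'benign'.

    Assumes low and high are bounds on the model's estimate, supplied upstream.
    The 0.2 width threshold for flagging a wide range is an illustrative choice.
    """
    if not 0.0 <= low <= high <= 1.0:
        raise ValueError("expected 0 <= low <= high <= 1")
    note = " (wide range, verify before acting)" if high - low > 0.2 else ""
    return f"{low:.0%} to {high:.0%} chance of malicious activity{note}"


if __name__ == "__main__":
    print(uncertainty_label(0.70, 0.82))
    print(uncertainty_label(0.35, 0.80))
```

Two alerts with the same midpoint can then look very different on screen, which is the calibration signal a binary label would hide.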

What This Means for Security Vendors

For companies like Microsoft and Palo Alto Networks, the competitive frontier in AI security tooling may soon shift away from raw model performance.

The next differentiator will likely be how effectively AI reasoning integrates into analyst workflows.

Security teams already operate under immense cognitive pressure. Interfaces that reduce uncertainty and support analytical reasoning are likely to outperform systems that merely generate automated recommendations.

This means that the future of AI security tools will depend as much on UX and interaction design as on machine learning architecture.

Beyond Cybersecurity

While the study focuses on SOC environments, its implications extend to any high-stakes domain where AI assists human decision-makers.

Healthcare diagnostics, financial fraud detection, autonomous operations, and risk analysis all face the same core challenge: people must understand and trust AI outputs before they act on them.

Accuracy alone does not guarantee adoption. Systems must communicate their reasoning in ways that align with human decision-making processes.

The Real Shift Happening in AI Systems

AI copilots are rapidly becoming the operational backbone of modern enterprise infrastructure.

But their effectiveness will ultimately depend on something far more human than algorithms.

The next phase of AI development is not just about building smarter models—it’s about designing interfaces that allow humans to reason alongside those models.

And in cybersecurity, where every alert could represent a real attack, that human-AI collaboration may determine whether organizations respond in minutes—or after the damage is already done.