Anthropic’s High-Wire Act: Balancing Scale, Safety, and Survival in the AI Race


Anthropic is squeezed between its founding commitment to cautious, safety-first AI development and the relentless demands of a hyper-competitive market dominated by OpenAI and Microsoft.

As revenue forecasts climb as high as $26 billion for 2026 and its enterprise tools proliferate, the company grapples with escalating capital needs and shifting alliances that blur the line between partner and rival.

This tension tests Anthropic’s ability to scale without sacrificing its “moral high ground.”

Let’s dissect Anthropic’s strategic maneuvers, the forces straining its identity, and the profound implications—including tailored takeaways for key tech industry players.

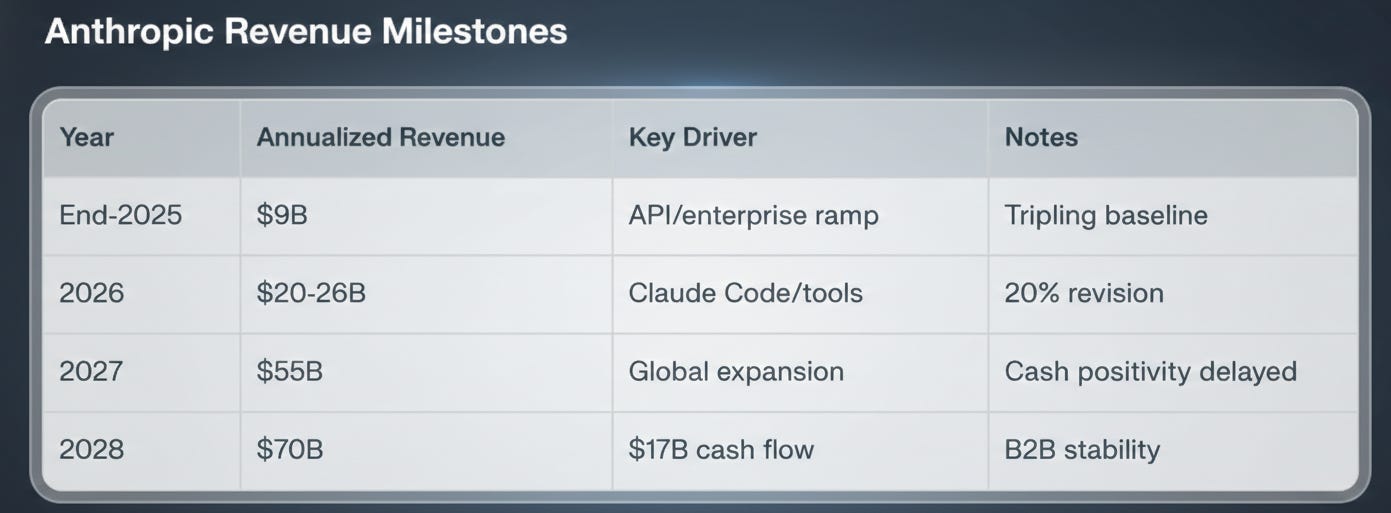

Explosive Revenue Ambitions Fuel Enterprise Pivot

Anthropic’s growth trajectory has stunned observers. The company is targeting nearly $9 billion in annualized revenue by the end of 2025 and $20-26 billion in 2026, a near tripling at the top of that range. Recent upward revisions of about 20% push its forecasts toward $55 billion in 2027 and $70 billion in 2028. About 80% of revenue comes from more than 300,000 business customers using its API and Claude Code, the coding tool that reached a run rate of nearly $1 billion after launch. This B2B focus delivers predictable revenue even as debates over AI infrastructure spending continue.
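The forecasts above can be sanity-checked with simple arithmetic. The minimal Python sketch below computes growth multiples and an implied compound annual growth rate from the article's own figures. It assumes the end-2025 annualized run rate is a fair baseline for comparison with full-year forecasts, which is a simplification; the helper names `growth_multiple` and `cagr` are illustrative and not taken from any source.

```python
# Revenue figures from the paragraph above, in billions of USD.
# These are forecasts and targets, not reported results.
FORECASTS = {
    "2025": 9.0,        # assumption: end-of-2025 annualized run rate used as baseline
    "2026_low": 20.0,
    "2026_high": 26.0,
    "2027": 55.0,
    "2028": 70.0,
}


def growth_multiple(start: float, end: float) -> float:
    """Return how many times larger `end` is than `start`."""
    return end / start


def cagr(start: float, end: float, years: int) -> float:
    """Return the compound annual growth rate between two values `years` apart."""
    return (end / start) ** (1 / years) - 1


if __name__ == "__main__":
    f = FORECASTS
    print(f"2025 -> 2026: {growth_multiple(f['2025'], f['2026_low']):.2f}x "
          f"to {growth_multiple(f['2025'], f['2026_high']):.2f}x")
    print(f"2026 (high) -> 2027: {growth_multiple(f['2026_high'], f['2027']):.2f}x")
    print(f"2027 -> 2028: {growth_multiple(f['2027'], f['2028']):.2f}x")
    print(f"Implied CAGR 2025 -> 2028: {cagr(f['2025'], f['2028'], 3):.1%}")
```

At the top of the 2026 range the multiple is about 2.9x, which is what "near tripling" refers to; the low end of the range implies roughly 2.2x.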

Safety Identity Under Market Strain

Founded in 2021 by former OpenAI leaders, Anthropic uses “Constitutional AI” to embed ethical principles into its Claude models, deliberately favoring reliability over speed.

Capital from Amazon and Google ($6B in total) fuels its scaling, but both partners now also compete with it and push for faster launches. Safety investments delay positive cash flow until 2028, yet Anthropic is betting that safety becomes a premium moat as regulations such as the EU AI Act take hold.

Rivalry Heats: Partners Become Predators

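Enterprise tools such as Claude Enterprise target developers, colliding with OpenAI’s push into small and midsize businesses and Microsoft’s strategy of bundling AI into its existing products. Meanwhile, Super Bowl ads loom as Altman courts CEOs directly.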

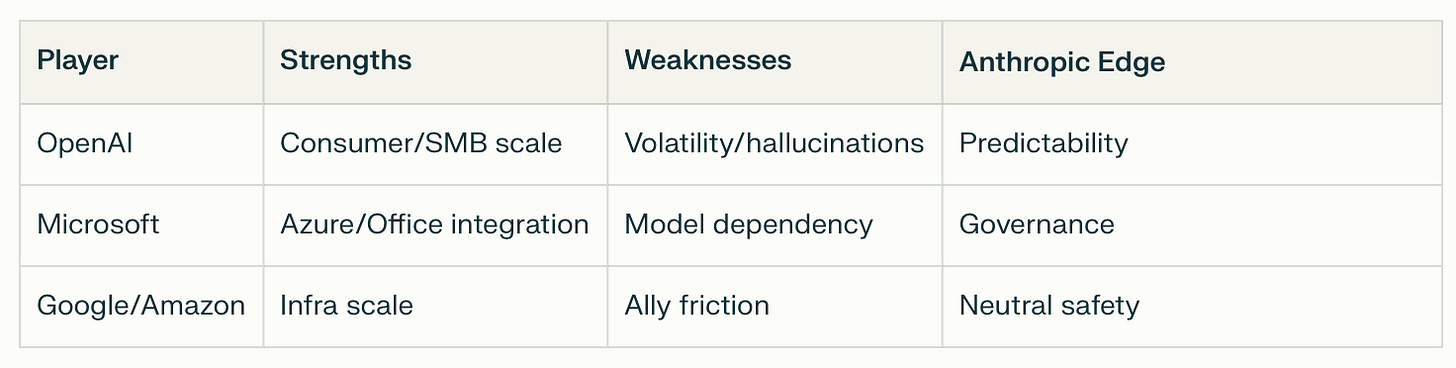

Competitive Landscape

What Anthropic’s Strategy Means for Tech Industry Players

Anthropic’s high-stakes balancing act reverberates across the tech ecosystem, forcing strategic recalibrations. Here’s how key players should respond, with actionable implications drawn from its enterprise surge and safety moat.

For OpenAI: Accelerate Enterprise Trust-Building

OpenAI dominates the consumer market but trails in the predictability that Fortune 500 buyers demand; Anthropic’s 80% enterprise revenue share exposes that vulnerability. Altman must invest heavily in compliance certifications and transparent rate limits to stem customer defections.

Expect pricing wars: OpenAI could bundle GPTs with premium support to match Claude Code’s roughly $1B run rate. In the long term, it should adopt an approach comparable to “Constitutional AI” to neutralize safety critiques, or risk ceding the high-margin enterprise market to rivals.

Pivot: Launch audited enterprise tiers.

For Microsoft: Double Down on Ecosystem Lock-In

Even with Copilot embedded across Office and Azure, Microsoft must contend with Anthropic’s appeal as an independent vendor, all amid ongoing antitrust scrutiny. Anthropic’s neutral governance attracts firms wary of vendor lock-in.

Microsoft can counter by open-sourcing safety tools or partnering on hybrid clouds. If Anthropic reaches $26B in annualized revenue, Microsoft’s $13B stake in OpenAI looks shaky; it should diversify, for example through acquisitions such as Hugging Face.

Opportunity: Use Azure’s dominance to subsidize Anthropic-style safety R&D, turning a threat into a stronger moat.

For Amazon and Google: Navigate Investor-Rival Tensions

Amazon ($4B) and Google ($2B) are backers turned competitors: their Trainium and TPU chips power Anthropic, but the same chips also power rival models on Bedrock and Vertex AI.

Anthropic’s scale-up validates their infrastructure bets yet erodes the margins of their own models.

Strategy: Offer exclusive compute deals tied to multi-model platforms, blurring exclusivity. If safety becomes premium, prioritize interpretability in next-gen chips.

Risk: Antitrust probes could deepen if Anthropic goes public; proactively divesting stakes could earn goodwill.

For Enterprise Software Giants (Salesforce, SAP, ServiceNow)

Anthropic’s Claude integrations signal that AI agents are poised to disrupt enterprise workflows.

These players must embed safety-first LLMs natively, favoring Anthropic over OpenAI as a partner in regulated sectors.

Upside: Co-develop vertical tools (e.g., finance compliance), capturing 20-30% margins from Anthropic’s pipeline.

Avoid: Pure reselling. Instead, build proprietary fine-tunes to own the data moat.

For Hardware Leaders (NVIDIA, AMD, Broadcom)

Anthropic’s compute hunger (an annual burn of $10B+) underscores the $1T+ data center boom. NVIDIA’s CUDA lock-in persists, but Anthropic’s ties to Amazon boost alternatives such as Trainium and AMD chips.

Action: Accelerate development of inference-optimized chips with built-in safety accelerators. Winners will diversify beyond training; losers face commoditization.

For Startups and VCs: Safety as the New VC Pitch

Anthropic shows that “responsible scaling” can attract enterprise capital; VCs should fund alignment startups (e.g., interpretability tools).

Founders: Position yourselves as the “Anthropic for X” (e.g., robotics, edge AI). Avoid hype cycles, and build audits into your roadmaps to appeal to acquirers such as Microsoft.

For Regulators and Policymakers

Anthropic’s path puts pressure on industry self-regulation. The U.S. and EU should incentivize safety benchmarks without stifling innovation. If Anthropic succeeds, policymakers could fast-track visas and tax breaks for ethical AI hubs; if it fails, expect regulatory overreach.

Industry Impact Heatmap

This framework equips players to thrive amid Anthropic’s ascent.

Broader AI Landscape Implications

When leading AI companies prove that strong safety practices can coexist with strong commercial performance, safety stops being a differentiator and becomes a requirement. Enterprise buyers, regulators, and insurers start demanding it by default. That raises the floor for everyone:

Enterprises adopt only models that meet strict reliability, alignment, and auditability standards.

Regulators gain clearer norms and benchmarks, reducing policy uncertainty.

Investors price AI risk more predictably, stabilizing what could otherwise be volatile, trillion-dollar markets.

Fewer “cowboy” deployments mean less chance of rogue or misaligned systems causing outsized harm or backlash.

In this world, safety is embedded infrastructure — like cybersecurity or compliance — not a nice-to-have. That reduces tail risks and makes the ecosystem healthier and more competitive.

Conversely, if safe, reliable models can’t compete commercially or technically, buyers gravitate to the largest incumbents, which can brute-force scale, compute, and distribution. That leads to:

Consolidation around a handful of Big Tech players

Higher barriers to entry for startups

Less diversity of approaches and slower innovation

Safety potentially becoming secondary to speed and dominance

Ironically, concentration can increase systemic risk: fewer actors mean fewer checks and less resilience.

To make the “success path” concrete:

Q1: Win enterprise deals that demonstrate trust, reliability, and measurable safety → prove the business case.

Mid-year: Launch Claude 4.0 (or a next-generation model) with clear capability and safety gains → show that cutting-edge performance and safeguards scale together.

The sequence is intentional: first establish market credibility with customers who value safety, then reinforce it with a major technical leap.